Claude Skills, Commands, and Agents: Toward a Unified Mission

The Pain of Prompts

When working with AI assistants, two friction points emerge repeatedly: the burden of writing long, detailed prompts for specialized tasks, and the need to reuse these prompts across multiple sessions.

Imagine explaining your dietary restrictions at every restaurant you visit. You’re allergic to shellfish but mollusks are fine. You can handle butter but not milk. Gluten-free, except oats are okay. Every time, you face a choice: either recite the full explanation from scratch while the waiter scribbles notes and your dinner companion waits, or hand over a printed card you’ve prepared. But you can’t have just one card—you need different versions for different cuisines, and you need to remember which card applies to Japanese versus Italian versus bakeries. The cards themselves become a burden: keeping them updated, choosing the right one, wondering if this particular card covers the specific situation.

Meanwhile, what you actually want is to order food and enjoy conversation. You don’t want to be stuck in a recurring explanation ritual that pulls you out of the moment every time.

The same problem runs through our now-daily interactions with AI. Every time you want AI to perform a specific task, whether it’s formatting a document according to brand guidelines, analyzing data using a particular methodology, or following a specific workflow, you face the choice of either typing out lengthy instructions again or managing a personal library of prompt templates. This creates cognitive overhead and disrupts the flow of work, particularly for tasks you perform regularly.

The Claude skills feature arrived recently to tackle this problem. But here’s the puzzle: skills, slash commands, and agents are all essentially just prompts under the hood—markdown files containing instructions. Why did Anthropic initially treat them as fundamentally different features? Why are they now merging skills and commands? What does this evolution reveal about the deeper challenge of human-AI interaction, and where should this technology ultimately go?

Finally, We’ve Some Skills

Given the problems of prompt management, it seems odd that a solution came this late, and even now it solves the problem only partially (more on that later).

Skills began as internal tools designed to solve a practical need: teaching Claude to generate common file formats like PDF, Word, PowerPoint, and Excel. These skills are essentially instructions to create those formats, sometimes including executable code. Seeing how useful they proved internally, the team released them to the public in October 2025. Two months later, on December 18, 2025, Anthropic announced that Skills are now an open standard, making them portable not only across different Claude platforms but also potentially adoptable by OpenAI and other companies.

Technical implementation: Skills are “folders of instructions, scripts, and resources that Claude loads dynamically to improve performance on specialized tasks.” More specifically, a skill is a directory containing a SKILL.md file (essentially a prompt) with organized folders that give Claude additional capabilities.

The key architectural principle is progressive disclosure. Each SKILL.md begins with a YAML section containing the skill’s name and description. This metadata is all that Claude loads into its context window up front, just enough to answer: what skills do I have, and what can they do? When a user makes a request, Claude determines which skills (yes, potentially multiple) are relevant to the task, loads the full instructions for those skills only, and applies them.
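Progressive disclosure can be sketched in a few lines of Python. This is an illustration only, not Anthropic’s implementation: the names `SkillMetadata`, `parse_frontmatter`, `load_metadata`, and `load_body` are hypothetical, and the parser handles only flat `key: value` lines rather than full YAML.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class SkillMetadata:
    name: str
    description: str
    path: Path  # location of SKILL.md, so the body can be read later


def parse_frontmatter(text: str) -> dict[str, str]:
    """Parse a flat `key: value` block delimited by `---` lines.

    Simplified on purpose: single-line string values only, not full YAML.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def load_metadata(skill_dir: Path) -> SkillMetadata:
    """Stage 1: keep only the name and description (small, always in context)."""
    skill_file = skill_dir / "SKILL.md"
    fields = parse_frontmatter(skill_file.read_text(encoding="utf-8"))
    return SkillMetadata(fields["name"], fields["description"], skill_file)


def load_body(meta: SkillMetadata) -> str:
    """Stage 2: read the full instructions once the skill is judged relevant."""
    text = meta.path.read_text(encoding="utf-8")
    # Everything after the closing `---` of the front matter is the body.
    parts = text.split("---", 2)
    return parts[2].strip() if len(parts) == 3 else text.strip()
```

The point of the split is cost: stage 1 stays small no matter how long each skill’s instructions are, so many skills can be installed without crowding the context window.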

Slash commands, by contrast, were introduced much earlier as a feature in Claude Code. They are defined as “frequently used prompts stored as Markdown files that Claude Code can execute.” They can be stored project-specifically in .claude/commands/ or globally in ~/.claude/commands/. Unlike skills, which Claude invokes autonomously, slash commands require explicit invocation by the user.
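Explicit invocation amounts to a deterministic file lookup. The sketch below is a hypothetical helper (`resolve_command` is not part of Claude Code), and it rests on two assumptions: that a command named `polish` lives in a file called `polish.md`, and that a project-level command takes precedence over a global one with the same name.

```python
from pathlib import Path
from typing import Optional


def resolve_command(name: str, project_root: Path, home: Path) -> Optional[Path]:
    """Return the Markdown file for `/name`, or None if no such command exists.

    Assumption: a project-level command shadows a global one of the same name.
    """
    candidates = [
        project_root / ".claude" / "commands" / f"{name}.md",  # project-specific
        home / ".claude" / "commands" / f"{name}.md",          # global
    ]
    for path in candidates:
        if path.is_file():
            return path
    return None
```

Nothing here is interpreted: either a file with that exact name exists or the lookup fails.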

This historical sequence matters. Commands came first because they mapped onto our existing mental model: computers execute what you tell them. Skills came later because they attempted something more ambitious: computers that understand what you mean and autonomously choose how to help.

Agents (or subagents) use a similar structure worth mentioning here. You define agents as Markdown files (again, basically prompts) stored under .claude/agents/. The key difference is that agents can run in parallel and maintain their own context windows. This design allows agents to focus on different areas independently and is well-suited for scenarios where large volumes of information need processing.

Intent Matching: The Intelligence behind Skills

The defining characteristic of skills is intent matching—Claude’s ability to understand what you want and proactively load the appropriate skill without explicit instruction.

Consider this example: if you say “polish:” followed by some text, Claude knows you’re not talking about nail polish. This is intent matching. It doesn’t require a specific trigger word; it tries to understand what you intend to do. Likewise, when you ask it to “clean up this text,” Claude can:

- Scan available skills

- Match your intent against skill descriptions

- Proactively suggest or use the polish skill
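The three steps above can be mimicked with a toy matcher. This is emphatically not how Claude does it: the actual mechanism is not public, and a language model weighs meaning rather than counting shared words. The function names, the word-overlap score, and the threshold of 2 are all assumptions made for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def match_skills(request: str, skills: dict[str, str], threshold: int = 2) -> list[str]:
    """Rank skills by how many words their description shares with the request."""
    request_words = tokenize(request)
    scored = []
    for name, description in skills.items():
        overlap = len(request_words & tokenize(description))
        if overlap >= threshold:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for determinism.
    return [name for _, name in sorted(scored, key=lambda s: (-s[0], s[1]))]


skills = {
    "polish": "Clean up text: fix grammar, typos, and awkward phrasing",
    "create-pdf": "Generate a PDF file from a document",
}
print(match_skills("clean up this text", skills))  # prints ['polish']
```

A keyword matcher like this breaks as soon as the user paraphrases (“tidy my draft” shares no words with the description), which is exactly the gap that model-based intent matching is meant to close.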

This is akin to asking your dog to fetch an object. The dog doesn’t rely purely on the word “fetch” but on a combination of your tone, body language, context, and learned verbal cues. Instead of “fetch,” you may say “Go get it!”, “Catch!”, or “Get the ball!” You may even use another language. And you may reinforce the intent in a variety of ways: pointing at the object, using an excited tone and body language, or simply holding up the toy. The strongest signal is usually the throwing motion itself, regardless of words.

Intent matching is an intelligent behavior because it needs to collect multiple clues to determine your true intent. If you’re just lying on the couch holding a beer and saying “go get it,” your dog understands it refers to the football game you’re watching, not an action command.

If your dog only responds to the exact command “fetch” and nothing else, that’s robot-like behavior. It is a slash command.

Why Commands Remain: The Reliability Problem

Despite the elegance of intent matching, slash commands serve an essential purpose because intent matching is not always reliable.

How does Claude’s skill-matching actually work under the hood? What triggers skill loading? Anthropic has not revealed the actual technical mechanism of intent matching, but there is evidence of significant reliability issues. Developer Scott Spence tested Claude Code’s skill activation across 200+ prompts and found that basic intent matching achieves only a 20% success rate. Even when a user’s request perfectly matches a skill’s description, Claude frequently “sees it, acknowledges it mentally, then completely ignores it and barrels ahead with implementation.”

Slash commands suffer no such failure mode. When you invoke a slash command, Claude will locate the command and do exactly as instructed, every single time.

Now you may be asking: if slash commands are this reliable, why do we need skills that work only one in five times?

The answer lies in what each mechanism demands from the user. Slash commands require precise knowledge—you must know the command exists and remember its exact name. Consider the movie Wolfs, starring Brad Pitt and George Clooney as fixers who clean up inconvenient situations. If you need to dispose of a dead body, you must know the specific phone number to call the cleaner. The slash command is exactly that phone number—you must know it exists and dial it exactly. No phone number, no cleaning job.

This is computer interaction’s original paradigm. Early operating systems such as UNIX and DOS required users to memorize commands and their arcane arguments. This design favored professionals who could invest in learning command syntax to achieve maximum efficiency. It’s why most software developers, and virtually all computer hackers, still prefer command-line tools: precision and speed trump ease of use.

Skills require no such memorization. You don’t need to know whether a “create pdf” skill exists. If it does, your task gets handled better; if it doesn’t, Claude still attempts the work using its base capabilities. The system degrades gracefully rather than refusing to operate.

This reveals a fundamental tension in human-AI interaction:

When you express intent and let AI interpret, you gain contextual intelligence at the cost of reliability. The AI might do exactly what you meant, or it might miss entirely. You don’t need to memorize anything, but you can’t guarantee outcomes.

When you specify exact actions, you gain certainty at the cost of cognitive overhead. The AI will execute precisely as instructed, but you must know what exists and remember how to invoke it.

Think of a skill as a set of best practices or operating procedures for your domain. When you assign a team member to the job, whether they follow these SOPs depends on their understanding of the task. Sometimes they do; sometimes they don’t. Commands are like hiring an external consultant for a surgical operation: they always follow rigid routines and generate highly predictable results.

For exploratory work and general assistance, skills offer contextual intelligence at the cost of reliability. For critical workflows demanding precision—deployment scripts, data transformations, compliance checks—slash commands provide the certainty that the exact procedure will execute as written.

No amount of technical refinement eliminates this trade-off. It’s not a bug in the implementation—it’s a feature of the problem space. The question isn’t whether to choose intelligence or precision, but how to fluidly move between them depending on context.

Toward Compositional Behavior

Recognizing this tension, Anthropic recently moved to merge skills and slash commands into a unified system. Both now support two invocation modes: automatic (Claude decides when to use based on intent) and explicit (you call it directly by name). You can still organize them in separate folders if you prefer, but under the hood they’re treated identically.
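One way to picture the unified system is a single registry with two entry points. The sketch below is a mental model under stated assumptions, not Anthropic’s design: `Capability`, `Registry`, and `handle` are hypothetical names, and the `matcher` callable is a stand-in for whatever intent matching the model actually performs.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Capability:
    name: str
    description: str
    instructions: str


class Registry:
    def __init__(self, matcher: Callable[[str, Capability], bool]):
        self._items: dict[str, Capability] = {}
        self._matcher = matcher  # stand-in for the model's intent matching

    def register(self, cap: Capability) -> None:
        self._items[cap.name] = cap

    def invoke_explicit(self, name: str) -> Capability:
        """Explicit mode: exact name lookup; an unknown name is an error."""
        if name not in self._items:
            raise KeyError(f"no capability named {name!r}")
        return self._items[name]

    def invoke_auto(self, request: str) -> Optional[Capability]:
        """Automatic mode: best effort; None means fall back to base behavior."""
        for cap in self._items.values():
            if self._matcher(request, cap):
                return cap
        return None


def handle(registry: Registry, request: str) -> Optional[Capability]:
    """Route `/name ...` to explicit mode and everything else to automatic mode."""
    if request.startswith("/"):
        parts = request[1:].split()
        return registry.invoke_explicit(parts[0] if parts else "")
    return registry.invoke_auto(request)
```

The two modes fail differently by design: explicit invocation raises on an unknown name, while automatic invocation quietly returns nothing and lets the base model carry on.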

This convergence didn’t emerge from technical necessity—it emerged from recognizing that the skill-versus-command distinction created more confusion than clarity. The real distinction isn’t between two types of capabilities, but between two modes of invocation. Every specialized capability benefits from both modes: sometimes you want Claude to intelligently decide when to apply it, sometimes you need to force its execution.

Whether this merger persists or gets revised in future iterations remains to be seen. At the time of this writing, the convergence is not yet fully acknowledged or implemented across all of Anthropic’s platforms. The implementation details may continue to evolve. But the underlying tension it attempts to resolve represents something fundamental about how humans interact with capable AI systems.

This is what I call compositional behavior; it demands a balance between intelligent flexibility and reliable precision.

Consider what happens when you decide to “make dinner.” This idea fires up a long chain of actions that are not only intelligent in themselves but also highly flexible: check grocery inventory, determine the menu, prepare the ingredients, cook the meal, present the meal, etc.

The first thing we do is analyze intent: would it be a regular dinner, how many people will be dining, or is it a special occasion like an anniversary where fancy food or other arrangements are in order?

The sequence of actions also needs to be flexible. The problem with rigidly defined routines is that any hurdle in any step can derail the entire project. You set your preferred grocery store to a certain Whole Foods, and when you arrive you see it’s closed for renovation. What happens? Do you drive another five miles to a Safeway, or go home and try to make do with whatever’s available? It’s quite likely you have some food in the fridge, and although it’s not exactly what the recipe calls for, you can adapt the plan to fit what you already have. How much adaptation is appropriate? That requires carefully balancing different factors, which is itself an act of intelligence.

If cooking isn’t your thing, the same problem shows up in project management. In typical project management work, you make plans and hope they work. But your plans are not rigid workflows that will collapse if one person fails to show up or something goes over budget. As a project manager, you collect information to gain understanding of the situation and take appropriate actions to bring things back on track.

Where are we now when we ask Claude to do this?

Currently, skills operate at a single level of invocation—you cannot call another skill from within a skill. What this means is when you ask the cleaner to do a job, he cannot call another specialist and act as a general contractor. Imagine an organization where the CEO has to assign work for everyone. How big do you think this organization can afford to be?

If you’ve divided the work into room cleaning and body transportation and already written individual skills for them, you cannot simply call those skills from your cleaner skill. You have to either duplicate those methodologies or orchestrate these skills externally in sequence.
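External orchestration in sequence looks roughly like the following. It is a deliberately small sketch: each skill is modeled as a plain Python function from state to state, and `clean_room`, `transport_body`, and `run_in_sequence` are hypothetical names borrowed from the analogy, not real skills or APIs.

```python
from typing import Callable

# A skill is modeled here as a function that takes the job state and returns
# the updated state. Real skills are prompts; this is only a stand-in.
Skill = Callable[[str], str]


def clean_room(state: str) -> str:
    return state + " | room cleaned"


def transport_body(state: str) -> str:
    return state + " | body transported"


def run_in_sequence(skills: list[Skill], task: str) -> str:
    """The caller, not any skill, decides the order and passes state along."""
    state = task
    for skill in skills:
        state = skill(state)
    return state


print(run_in_sequence([transport_body, clean_room], "job #1"))
# prints: job #1 | body transported | room cleaned
```

The ordering logic lives entirely outside the skills, which is the limitation: a “cleaner” skill cannot itself decide to bring in the other two.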

With the merging of skills and commands, we may be better prepared to build these complicated sequences of work. Here’s the vision:

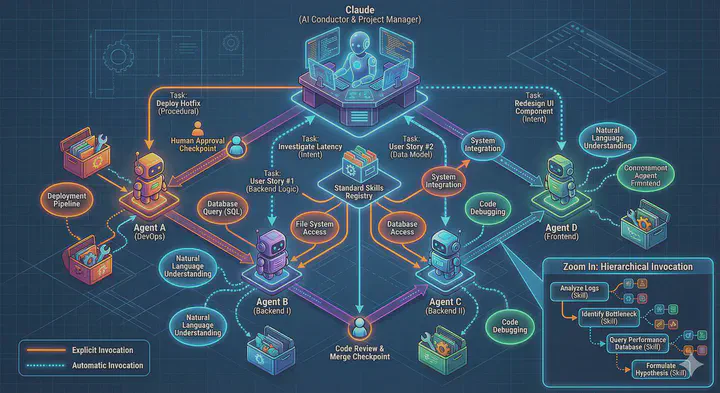

A project gets divided into different units of work (depending on whether it’s predictive or iterative, it could be a work package, a user story, etc.), and these units can be skill-oriented and assigned to agents. For obvious reasons, multiple agents can be equipped with the same skills (so they can run in parallel), and a single agent can be equipped with several different skills.

Now imagine Claude as the project manager that coordinates these agents and assigns each one work along with the skills it needs. Each agent operates with both the flexibility to interpret intent (using skills automatically) and the precision to execute specific procedures (calling skills explicitly).
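The coordinator role can be sketched as follows. Everything here is hypothetical scaffolding for the vision, not an existing Claude feature: `WorkUnit`, `Agent`, `assign`, and `coordinate` are invented names, agents are simulated with threads, and the least-loaded assignment rule is just one plausible policy.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class WorkUnit:
    title: str
    skill: str  # name of the skill this unit needs


@dataclass
class Agent:
    name: str
    skills: set[str]
    context: list[str] = field(default_factory=list)  # private, per-agent context

    def run(self, unit: WorkUnit) -> str:
        self.context.append(unit.title)
        return f"{self.name} did '{unit.title}' using {unit.skill}"


def assign(units: list[WorkUnit], agents: list[Agent]) -> list[tuple[Agent, WorkUnit]]:
    """Give each unit to the least-loaded agent that has the required skill."""
    load = {agent.name: 0 for agent in agents}
    plan = []
    for unit in units:
        capable = [a for a in agents if unit.skill in a.skills]
        if not capable:
            raise ValueError(f"no agent has skill {unit.skill!r}")
        chosen = min(capable, key=lambda a: load[a.name])
        load[chosen.name] += 1
        plan.append((chosen, unit))
    return plan


def coordinate(units: list[WorkUnit], agents: list[Agent]) -> list[str]:
    """Run all assigned units in parallel; results come back in plan order."""
    plan = assign(units, agents)
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda pair: pair[0].run(pair[1]), plan))
```

Two agents sharing a skill lets matching units run side by side, while each agent’s `context` list stays separate, mirroring the separate context windows described earlier.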

To facilitate this vision, we need robust mechanisms for skills to call other skills, agents to call other agents—in other words, multiple levels of invocation. We also need human intervention placed at strategic points. This is not yet a reality, and we may need a long time to finally get there. But the merger of skills and commands is a step in this direction, acknowledging that the same capability needs both automatic intelligence and explicit control depending on the level of orchestration.

The Path Forward

The evolution of AI applications demonstrates this: we’re not building static tools, we’re negotiating the shape of human-AI collaboration. The technical implementations will continue evolving. What persists is the underlying challenge: how do we balance the efficiency of letting AI interpret our intent against the necessity of forcing specific behaviors when stakes are high?

This same tension appears in the compositional behavior we’ll need for complex tasks: when to trust Claude’s autonomous orchestration of multiple capabilities, when to specify rigid workflows. The answer isn’t purely technical. It’s about understanding what we’re actually trying to accomplish and choosing the appropriate level of control.

The dietary restriction analogy from the beginning captures this perfectly. What we ultimately want is neither to explain from scratch every time nor to manage a library of rigid instruction cards. What we want is a dining companion who learns our preferences, understands context, makes good decisions most of the time—but can receive explicit instructions when the situation demands it.

That’s the direction: AI systems that default to intelligent interpretation but gracefully accept explicit control. Systems that compose capabilities fluidly across multiple levels of abstraction. Systems that make the distinction between automatic and explicit invocation as natural as the difference between “make dinner” and “follow this exact recipe.”

Sources: